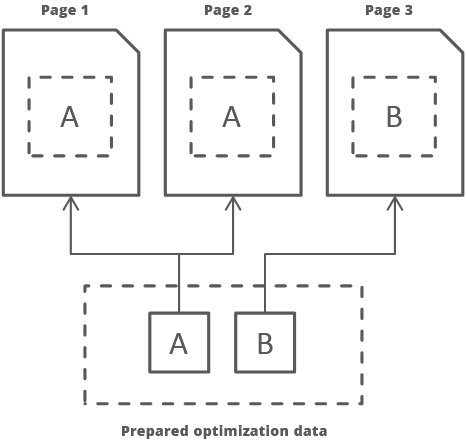

This is a specially developed proprietary technology that runs during optimization. It generates optimization data specific to a particular page structure and reuses that data when optimizing other pages with the same structure. This reduces the time needed to optimize each page, which in turn lowers the server load and shortens the time it takes for updated content to appear.

The related settings can be found here.

Details

When a page is analyzed, a special algorithm extracts its unique page structure. If optimization data has already been prepared for this structure, that data is used for the rest of the optimization, and no time is spent on deep optimization analysis. As a result, this saves a lot of time and lets fresh content be shown much faster (roughly 10 times faster).
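The actual extraction algorithm is proprietary and is not described in this documentation. Purely as an illustration, the Python sketch below assumes that a page's "structure" is its sequence of tags and class names, with all text and other attributes stripped, and hashes that skeleton into a lookup key. The names `structure_signature` and `_SkeletonParser` are hypothetical and are not part of the product.

```python
import hashlib
from html.parser import HTMLParser


class _SkeletonParser(HTMLParser):
    """Hypothetical helper: records the tag/class skeleton, ignoring text."""

    def __init__(self) -> None:
        super().__init__()
        self.tokens: list[str] = []

    def handle_starttag(self, tag, attrs):
        # Assumption: only the tag name and its sorted classes define structure;
        # text, ids, hrefs and other attributes are treated as page content.
        classes = ""
        for name, value in attrs:
            if name == "class" and value:
                classes = ".".join(sorted(value.split()))
        self.tokens.append(f"<{tag}.{classes}")

    def handle_endtag(self, tag):
        self.tokens.append(f"</{tag}")


def structure_signature(html: str) -> str:
    """Return a hash that is equal for pages sharing the same markup skeleton."""
    parser = _SkeletonParser()
    parser.feed(html)
    parser.close()
    skeleton = "|".join(parser.tokens)
    return hashlib.sha256(skeleton.encode("utf-8")).hexdigest()


page_a = '<article class="post"><h1>First post</h1><p>Hello</p></article>'
page_b = '<article class="post"><h1>Another title</h1><p>Other text</p></article>'
page_c = '<main><ul><li>Item</li></ul></main>'
print(structure_signature(page_a) == structure_signature(page_b))  # True
print(structure_signature(page_a) == structure_signature(page_c))  # False
```

Two posts with different text but identical markup map to the same key, so data prepared for one could be reused for the other.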

If no prepared optimization data is found, the page is fully processed, and the resulting data is stored for that page structure so it can be reused later.

Fast processing

This is an additional algorithm that optimizes a page using its structure instead of its full content. It speeds up processing further (by about 2 to 10 times, especially on pages with a very large DOM), but it can produce style assets that are about 5 to 20% larger. This is acceptable because most of the non-critical part is still separated out and can be delayed.
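The documentation does not describe how fast processing works internally. As a rough intuition for why large DOMs benefit most, the sketch below assumes that elements sharing the same tag and classes count as one structural unit, so an expensive per-element analysis would run once per unique structure rather than once per element. The `Element` type and `structural_representatives` function are hypothetical, and the sketch only models the speed side of the trade-off, not the larger style output.

```python
from typing import List, Tuple

# Hypothetical, simplified DOM node: (tag name, class names).
Element = Tuple[str, Tuple[str, ...]]


def structural_representatives(elements: List[Element]) -> List[Element]:
    """Keep one element per (tag, classes) key, preserving first-seen order."""
    return list(dict.fromkeys(elements))


# A page with a header, 5000 identical product cards, and a footer.
dom: List[Element] = (
    [("header", ("site-header",))]
    + [("div", ("card", "product"))] * 5000
    + [("footer", ())]
)
work = structural_representatives(dom)
print(len(dom), len(work))  # 5002 3
```

The more repetitive the markup, the larger the gap between the number of elements and the number of unique structures, which matches the note that the gain is greatest on pages with a huge DOM.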